As part of MonoTouch’s switch to CommonCrypto, most of the digest (hash) algorithm implementations were changed to use CommonDigest. This includes:

MD5CryptoServiceProvider, SHA1[CryptoServiceProvider|Managed], SHA256Managed, SHA384Managed and SHA512Managed inside mscorlib.dll; and MD2Managed, MD4Managed and SHA224Managed inside Mono.Security.dll.

If you know the System.Security.Cryptography namespace well, you’ll note that this covers every HashAlgorithm except RIPEMD160Managed, plus all the extra ones Mono provides to support (mostly older) X.509 certificates.

This also has indirect effects on other types: all HMAC and MAC (e.g. MACTripleDES) implementations use the default hash algorithm implementations. This means that all of them, except for HMACRIPEMD160, now use CommonCrypto and will be faster too.

Note: Mono never followed the [Managed|CryptoServiceProvider] suffixes: by default everything was always managed and will now be native (for MonoTouch). So don’t be confused by the names. Source/binary compatibility requires Mono’s type names to match Microsoft’s, regardless of how they are implemented.

Performance

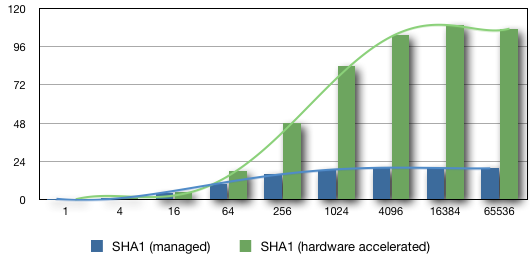

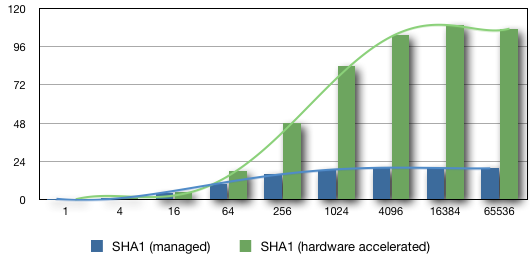

The best performance comes from SHA1, at 107 MB/second (mean value across my iPod4, iPad1 and iPad3 devices). This is also the best improvement ratio, 6.7x faster than managed – even though SHA1 is the most optimized (i.e. unrolled) managed implementation Mono has.

The secret? Hardware acceleration. Apple’s source code shows that hardware acceleration is used (at least on some ARM devices) if the blocks being processed are large enough (to offset the cost of initializing the hardware).

It’s always a bit hard to be 100% certain we’re using the hardware, as there’s no way to ask for (or against) it, or even to query its use or availability. In this case the numbers do not make it very clear: the curves are too similar between all native implementations to jump to a conclusion. My own gut feeling is that we’re seeing the effects of hardware acceleration, but it has lost its shock value because it’s being compared to Mono’s best implementation, performance-wise.

Getting the most out of the update

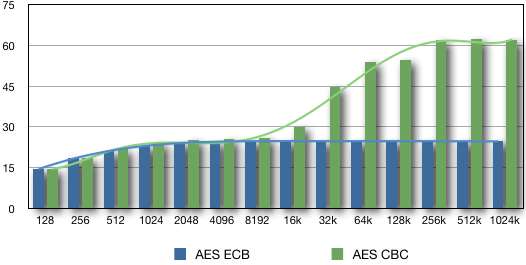

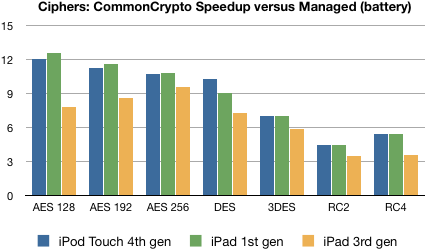

The graphic above shows considerable performance gains: from 3.1x up to 6.7x. Now the question is: can we get such gains inside our applications?

Unsurprisingly, it comes down to selecting an optimal buffer size (and that advice also holds for older MonoTouch versions, Mono itself and other Mono-based products). This is not always as easy as it sounds (e.g. SSL/TLS), but if you can control your buffer size (and measure) then you can get the best performance for your application.
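The "control your buffer size (and measure)" advice can be sketched as a tiny timing harness. This is only an illustration: it uses Python's `hashlib` as a stand-in rather than MonoTouch or CommonCrypto, the names `sha1_throughput` and `measure` are made up for this example, and the numbers it prints on your machine will not match the device results in this post.

```python
import hashlib
import time


def sha1_throughput(buffer_size, total_bytes=4 * 1024 * 1024):
    """Return the MB/s reached when hashing ~total_bytes in buffer_size chunks."""
    chunk = bytes(buffer_size)  # zero-filled buffer, allocated once (no I/O)
    rounds = max(1, total_bytes // buffer_size)
    digest = hashlib.sha1()
    start = time.perf_counter()
    for _ in range(rounds):
        digest.update(chunk)
    elapsed = time.perf_counter() - start
    megabytes = (rounds * buffer_size) / (1024 * 1024)
    return megabytes / max(elapsed, 1e-9)  # guard against a zero timer reading


def measure(buffer_sizes=(16, 64, 256, 1024, 4096, 16384)):
    """Map each candidate buffer size to its measured throughput."""
    return {size: sha1_throughput(size) for size in buffer_sizes}


if __name__ == "__main__":
    for size, mbps in measure().items():
        print(f"{size:>6} bytes: {mbps:8.1f} MB/s")
```

Run it a few times and look at the shape of the curve rather than at a single number; a one-shot timing like this is noisy.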

The next graphic shows the performance (managed versus native) of SHA1. There’s no gain from using CommonCrypto before reaching 16 bytes but, surprisingly, there’s no loss either. That’s unlike the /dev/crypto results and is very good news, since it means this update should not degrade performance for existing applications.

Another similarity: in both cases the optimal buffer size is (close to) 4096 bytes – and that’s true for the other algorithms as well. That should not be a huge surprise for managed code, since it’s the default value Mono has used for years when computing the hash of a stream.

The (second) piece of good news is that the managed and native (and hardware) optimal buffer sizes are close enough that a single value can be shared. It simply makes coding easier for everyone 🙂
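Sharing a single buffer size means the streaming loop can look the same whichever implementation sits underneath. A minimal sketch of that loop follows, again in Python with `hashlib` standing in for the real thing; `hash_stream` and `BUFFER_SIZE` are hypothetical names for this example, not Mono APIs.

```python
import hashlib
import io

BUFFER_SIZE = 4096  # the (close to) optimal value reported in this post


def hash_stream(stream, algorithm="sha1", buffer_size=BUFFER_SIZE):
    """Hash a binary stream by reading it in fixed-size blocks."""
    digest = hashlib.new(algorithm)
    while True:
        block = stream.read(buffer_size)
        if not block:  # an empty read means end of stream
            break
        digest.update(block)
    return digest.hexdigest()


if __name__ == "__main__":
    data = b"abc" * 5000
    print(hash_stream(io.BytesIO(data)))
```

The digest is the same whatever block size is used; only the time it takes to compute changes.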

So is that 6.7x increase for real? Not quite. Didn’t you hear me repeating that benchmarks are lies?

Benchmarking cryptographic implementations can be (and generally is) done with minimal I/O. Memory (RAM) is much faster than disk (or any type of storage) and faster than networking. Besides the I/O time, this also removes (or reduces) the need for memory allocations (and the related GC time).

OTOH your application, if it’s not a benchmark app, will need to do a lot more I/O (loading, saving or transmitting the data), which will result in memory allocations… all of which will reduce the performance gain. Also, the faster the cryptographic implementation becomes, the less forgiving it will be of I/O times.
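A back-of-the-envelope way to see this is the usual Amdahl-style estimate: only the time spent hashing gets faster, the rest (I/O, allocations, GC) does not. The sketch below is an assumption-laden illustration, not something measured for this post; `overall_speedup` is a hypothetical helper and the fractions passed to it are invented.

```python
def overall_speedup(hash_fraction, hash_speedup):
    """Estimate end-to-end speedup when only the hashing part gets faster.

    hash_fraction: share of the original run time spent hashing (0.0 to 1.0).
    hash_speedup:  how many times faster the hashing itself became.
    """
    if not 0.0 <= hash_fraction <= 1.0:
        raise ValueError("hash_fraction must be between 0.0 and 1.0")
    if hash_speedup <= 0:
        raise ValueError("hash_speedup must be positive")
    # The untouched part keeps its cost; the hashing part shrinks.
    return 1.0 / ((1.0 - hash_fraction) + hash_fraction / hash_speedup)


if __name__ == "__main__":
    # Invented fractions, combined with the 6.7x SHA1 ratio from this post.
    for fraction in (0.25, 0.5, 0.8, 1.0):
        print(f"{fraction:.0%} hashing: {overall_speedup(fraction, 6.7):.2f}x overall")
```

Only a workload that does nothing but hash (a fraction of 1.0, i.e. a benchmark) gets the full 6.7x.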

So your mileage will vary. Someone already reported gains near 3-4x, which is quite impressive inside a real application. IMO the best news is the lack of scenarios where things get worse – it should be a win for everyone 🙂

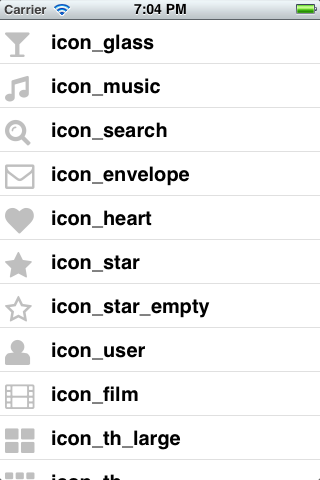
